Hungarian Method

The Hungarian method is a combinatorial optimization technique that solves the assignment problem in polynomial time and anticipated later primal-dual methods. In 1955, Harold Kuhn used the term “Hungarian method” to honour two Hungarian mathematicians, Dénes Kőnig and Jenő Egerváry. Let’s go through the steps of the Hungarian method with the help of a solved example.

Hungarian Method to Solve Assignment Problems

The Hungarian method is a simple way to solve assignment problems. Let us first discuss the assignment problems before moving on to learning the Hungarian method.

What is an Assignment Problem?

An assignment problem is a special case of the transportation problem. The goal is to allocate a number of resources to an equal number of activities, one resource per activity, so that the overall cost of allocation is minimised or the total profit is maximised.

The problem arises because available resources such as workers and machines have varying degrees of efficiency for executing different activities, and hence the cost, profit, or loss of conducting such activities varies.

Assume we have ‘n’ jobs to do on ‘m’ machines, with one job per machine. Each machine can accomplish each job, but at a different level of efficiency. Our goal is to assign jobs to machines at the least total cost (or the maximum total profit).

Hungarian Method Steps

Check whether the number of rows equals the number of columns; if it does, the assignment problem is said to be balanced, and you can go to step 1. If it is not balanced, balance it first by adding dummy rows or columns before the algorithm is applied.

Step 1 – In the given cost matrix, subtract the least cost element of each row from all the entries in that row. Make sure that each row has at least one zero.

Step 2 – In the resultant cost matrix produced in step 1, subtract the least cost element in each column from all the components in that column, ensuring that each column contains at least one zero.

Step 3 – Assign zeros

- Examine the rows one by one until you find a row with precisely one unmarked zero. Encircle this single unmarked zero to make an assignment. Cross out all other zeros in the column of the encircled zero, because they cannot be used in any future assignment. Continue in this manner until you’ve gone through all of the rows.

- Examine the columns one by one until you find one with precisely one unmarked zero. Encircle this single unmarked zero to make an assignment, and cross out every other zero in its row. Continue until you’ve gone through all of the columns.

Step 4 – Perform the Optimal Test

- The present assignment is optimal if each row and column has exactly one encircled zero.

- The present assignment is not optimal if at least one row or column is missing an assignment (i.e., if at least one row or column has no encircled zero). In that case, continue to step 5.

Step 5 – Draw the least number of straight lines to cover all of the zeros as follows:

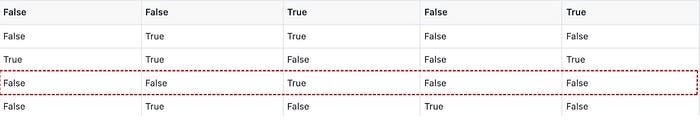

(a) Mark the rows that have no assignment.

(b) Mark the columns that have zeros in marked rows (if they haven’t already been marked).

(c) Mark the rows that have assignments in marked columns (if they haven’t previously been marked).

(d) Repeat (b) and (c) until no further marking is possible.

(e) Draw lines through all unmarked rows and all marked columns. If the number of these lines equals the order of the matrix, then the solution is optimal; otherwise, it is not.

Step 6 – Find the smallest element that is not covered by the straight lines. Subtract it from all the uncovered elements and add it to all the elements that lie at the intersection of two lines, but leave the rest of the elements unchanged.

Step 7 – Repeat steps 3 – 6 until an optimal assignment is found.
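The reduction in steps 1 and 2 and the adjustment in step 6 can be sketched in Python as follows. This is a minimal illustration, not a full solver: the function names `reduce_rows_and_columns` and `adjust_uncovered` are hypothetical, and the sets of covered rows and columns are assumed to come from the line-drawing procedure in step 5.

```python
def reduce_rows_and_columns(cost):
    """Steps 1 and 2: subtract each row's minimum, then each column's minimum."""
    rows = [[x - min(row) for x in row] for row in cost]
    col_min = [min(col) for col in zip(*rows)]
    return [[x - col_min[j] for j, x in enumerate(row)] for row in rows]


def adjust_uncovered(matrix, covered_rows, covered_cols):
    """Step 6: subtract the smallest uncovered element from every uncovered
    entry and add it to every entry lying on two lines."""
    n = len(matrix)
    delta = min(matrix[i][j]
                for i in range(n) if i not in covered_rows
                for j in range(n) if j not in covered_cols)
    result = [row[:] for row in matrix]
    for i in range(n):
        for j in range(n):
            if i not in covered_rows and j not in covered_cols:
                result[i][j] -= delta      # uncovered entry
            elif i in covered_rows and j in covered_cols:
                result[i][j] += delta      # intersection of two lines
    return result


# Illustrative 3x3 matrix (an assumption, not taken from the text).
reduced = reduce_rows_and_columns([[1, 2, 3], [1, 4, 5], [1, 6, 7]])
# All zeros of `reduced` lie in row 0 and column 0, so two lines suffice
# and the matrix must be adjusted before a full assignment exists.
adjusted = adjust_uncovered(reduced, covered_rows={0}, covered_cols={0})
```

After the adjustment every row and column of `adjusted` contains a zero outside the first row and column, so the zero-assignment step can be attempted again.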

Hungarian Method Example

Use the Hungarian method to solve the given assignment problem stated in the table. The entries in the matrix represent each man’s processing time in hours.

\(\begin{array}{l}\begin{bmatrix} & I & II & III & IV & V \\1 & 20 & 15 & 18 & 20 & 25 \\2 & 18 & 20 & 12 & 14 & 15 \\3 & 21 & 23 & 25 & 27 & 25 \\4 & 17 & 18 & 21 & 23 & 20 \\5 & 18 & 18 & 16 & 19 & 20 \\\end{bmatrix}\end{array} \)

With 5 jobs and 5 men, the stated problem is balanced.

\(\begin{array}{l}A = \begin{bmatrix}20 & 15 & 18 & 20 & 25 \\18 & 20 & 12 & 14 & 15 \\21 & 23 & 25 & 27 & 25 \\17 & 18 & 21 & 23 & 20 \\18 & 18 & 16 & 19 & 20 \\\end{bmatrix}\end{array} \)

Subtract the smallest element of each row from every element in that row, so that each row has at least one zero.

\(\begin{array}{l}A = \begin{bmatrix}5 & 0 & 3 & 5 & 10 \\6 & 8 & 0 & 2 & 3 \\0 & 2 & 4 & 6 & 4 \\0 & 1 & 4 & 6 & 3 \\2 & 2 & 0 & 3 & 4 \\\end{bmatrix}\end{array} \)

Subtract the smallest element of each column from every element in that column, so that each column has at least one zero.

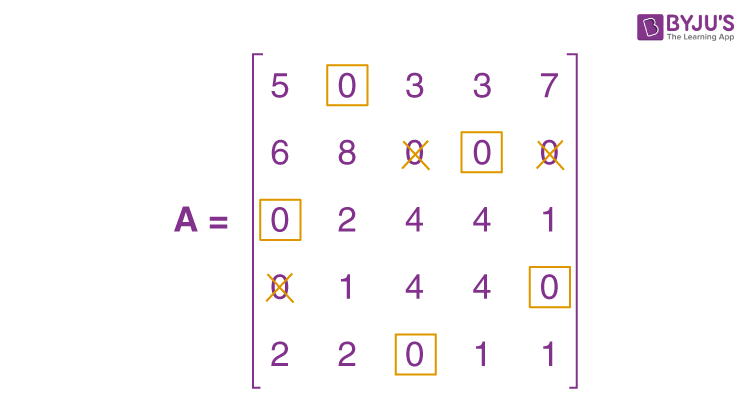

\(\begin{array}{l}A = \begin{bmatrix}5 & 0 & 3 & 3 & 7 \\6 & 8 & 0 & 0 & 0 \\0 & 2 & 4 & 4 & 1 \\0 & 1 & 4 & 4 & 0 \\2 & 2 & 0 & 1 & 1 \\\end{bmatrix}\end{array} \)

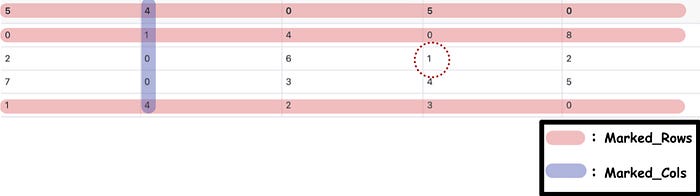

When the zeros are assigned, we get the following:

The present assignment is optimal because each row and column contain precisely one encircled zero.

The optimal assignments are 1 to II, 2 to IV, 3 to I, 4 to V, and 5 to III.

Hence, z = 15 + 14 + 21 + 20 + 16 = 86 hours is the optimal time.
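The arithmetic of this example can be double-checked with a few lines of Python. The sketch below recomputes the cost of the stated assignment and, as a second check, adds up the amounts subtracted during the row and column reductions; because no further adjustment was needed in this example, the two totals should agree. The variable names are illustrative only.

```python
cost = [
    [20, 15, 18, 20, 25],
    [18, 20, 12, 14, 15],
    [21, 23, 25, 27, 25],
    [17, 18, 21, 23, 20],
    [18, 18, 16, 19, 20],
]
# Man -> job as 0-based column indices: 1->II, 2->IV, 3->I, 4->V, 5->III.
assignment = [1, 3, 0, 4, 2]

# Cost of the assignment read from the original matrix.
total = sum(cost[i][j] for i, j in enumerate(assignment))

# Total amount removed by the row reduction and the column reduction.
row_min = [min(row) for row in cost]
after_rows = [[x - m for x in row] for row, m in zip(cost, row_min)]
col_min = [min(col) for col in zip(*after_rows)]
subtracted = sum(row_min) + sum(col_min)

print(total, subtracted)  # 86 86
```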

Practice Question on Hungarian Method

Use the Hungarian method to solve the following assignment problem shown in the table. The matrix entries represent the time, in hours, that each machine takes to process each job.

\(\begin{array}{l}\begin{bmatrix}J/M & I & II & III & IV & V \\1 & 9 & 22 & 58 & 11 & 19 \\2 & 43 & 78 & 72 & 50 & 63 \\3 & 41 & 28 & 91 & 37 & 45 \\4 & 74 & 42 & 27 & 49 & 39 \\5 & 36 & 11 & 57 & 22 & 25 \\\end{bmatrix}\end{array} \)


Frequently Asked Questions on Hungarian Method

What is the Hungarian method?

The Hungarian method is a combinatorial optimization technique that solves the assignment problem in polynomial time and anticipated later primal–dual approaches.

What are the steps involved in Hungarian method?

The following is a quick overview of the Hungarian method: Step 1: Subtract the row minima. Step 2: Subtract the column minima. Step 3: Cover all zeros with the minimum number of lines. Step 4: If fewer lines are needed than the order of the matrix, create additional zeros and return to step 3.

What is the purpose of the Hungarian method?

The Hungarian method finds the minimum total cost when workers are assigned to activities one-to-one and the cost of every worker-activity pair is known.


Hungarian Algorithm for Assignment Problem | Set 2 (Implementation)

Given a 2D array arr of size N*N, where arr[i][j] denotes the cost for the i-th worker to complete the j-th job. Any worker can be assigned to perform any job. The task is to assign the jobs so that each worker performs exactly one job and each job is performed by exactly one worker, in such a way that the total cost of the assignment is minimized.

Input: arr[][] = {{3, 5}, {10, 1}}

Output: 4

Explanation: The optimal assignment is to assign job 1 to the 1st worker and job 2 to the 2nd worker. Hence, the optimal cost is 3 + 1 = 4.

Input: arr[][] = {{2500, 4000, 3500}, {4000, 6000, 3500}, {2000, 4000, 2500}}

Output: 9500

Explanation: The optimal assignment is to assign job 2 to the 1st worker, job 3 to the 2nd worker and job 1 to the 3rd worker. Hence, the optimal cost is 4000 + 3500 + 2000 = 9500.

Different approaches to solve this problem are discussed in this article.

Approach: The idea is to use the Hungarian Algorithm to solve this problem. The algorithm is as follows:

1. For each row of the matrix, find the smallest element and subtract it from every element in its row.

2. Repeat step 1 for all columns.

3. Cover all zeros in the matrix using the minimum number of horizontal and vertical lines.

4. Test for optimality: if the minimum number of covering lines is N, an optimal assignment is possible. If fewer than N lines are needed, an optimal assignment has not yet been found; proceed to step 5.

5. Determine the smallest entry not covered by any line. Subtract this entry from each uncovered row, and then add it to each covered column. Return to step 3.

Consider an example to understand the approach:

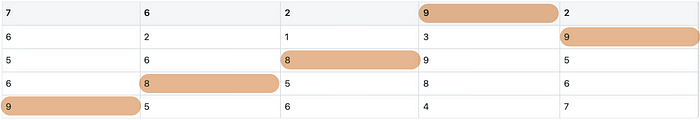

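Let the 2D array be:

$$\begin{bmatrix}2500 & 4000 & 3500 \\ 4000 & 6000 & 3500 \\ 2000 & 4000 & 2500\end{bmatrix}$$

Step 1: Subtract the minimum of every row. 2500, 3500 and 2000 are subtracted from rows 1, 2 and 3 respectively.

$$\begin{bmatrix}0 & 1500 & 1000 \\ 500 & 2500 & 0 \\ 0 & 2000 & 500\end{bmatrix}$$

Step 2: Subtract the minimum of every column. 0, 1500 and 0 are subtracted from columns 1, 2 and 3 respectively.

$$\begin{bmatrix}0 & 0 & 1000 \\ 500 & 1000 & 0 \\ 0 & 500 & 500\end{bmatrix}$$

Step 3: Cover all zeroes with the minimum number of horizontal and vertical lines.

Step 4: Since we need 3 lines to cover all zeroes, the optimal assignment is found. In the original matrix

$$\begin{bmatrix}2500 & 4000 & 3500 \\ 4000 & 6000 & 3500 \\ 2000 & 4000 & 2500\end{bmatrix}$$

the selected entries are 4000 in row 1, 3500 in row 2 and 2000 in row 3. So the optimal cost is 4000 + 3500 + 2000 = 9500.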

To implement the above algorithm, the idea is to use the max_cost_assignment() function defined in the dlib library. This function is an implementation of the Hungarian algorithm (also known as the Kuhn-Munkres algorithm) which runs in $O(N^3)$ time. It solves the optimal assignment problem; because it maximizes the total, a minimum-cost problem is passed to it with the costs negated.

Below is the implementation of the above approach:

Time Complexity: $O(N^3)$

Auxiliary Space: $O(N^2)$
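For small inputs, the expected outputs of the two examples above can be cross-checked by brute force. The Python sketch below is not the dlib-based approach described in the text: it simply tries every permutation, so it runs in factorial time and is only meant as a reference for tiny matrices. The function name `brute_force_assignment` is hypothetical.

```python
from itertools import permutations


def brute_force_assignment(arr):
    """Return (minimum cost, permutation) where perm[i] is the 0-based job
    given to worker i. Tries all N! permutations."""
    n = len(arr)
    best_cost, best_perm = None, None
    for perm in permutations(range(n)):
        c = sum(arr[i][perm[i]] for i in range(n))
        if best_cost is None or c < best_cost:
            best_cost, best_perm = c, perm
    return best_cost, best_perm


print(brute_force_assignment([[3, 5], [10, 1]]))  # (4, (0, 1))
print(brute_force_assignment([[2500, 4000, 3500],
                              [4000, 6000, 3500],
                              [2000, 4000, 2500]]))  # (9500, (1, 2, 0))
```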


Hungarian algorithm for solving the assignment problem

Statement of the assignment problem

There are several standard formulations of the assignment problem (all of which are essentially equivalent). Here are some of them:

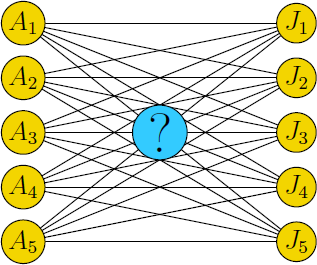

There are $n$ jobs and $n$ workers. Each worker specifies the amount of money they expect for a particular job. Each worker can be assigned to only one job. The objective is to assign jobs to workers in a way that minimizes the total cost.

Given an $n \times n$ matrix $A$ , the task is to select one number from each row such that exactly one number is chosen from each column, and the sum of the selected numbers is minimized.

Given an $n \times n$ matrix $A$ , the task is to find a permutation $p$ of length $n$ such that the value $\sum A[i]\left[p[i]\right]$ is minimized.

Consider a complete bipartite graph with $n$ vertices per part, where each edge is assigned a weight. The objective is to find a perfect matching with the minimum total weight.

It is important to note that all the above scenarios are " square " problems, meaning both dimensions are always equal to $n$ . In practice, similar " rectangular " formulations are often encountered, where $n$ is not equal to $m$ , and the task is to select $\min(n,m)$ elements. However, it can be observed that a "rectangular" problem can always be transformed into a "square" problem by adding rows or columns with zero or infinite values, respectively.

We also note that by analogy with the search for a minimum solution, one can also pose the problem of finding a maximum solution. However, these two problems are equivalent to each other: it is enough to multiply all the weights by $-1$ .
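The two transformations mentioned above can be sketched in Python. This is a minimal illustration under assumptions: the helper names `pad_to_square` and `negate` are hypothetical, and the default fill value of zero is one possible choice (the text also mentions infinite values).

```python
def pad_to_square(A, fill=0):
    """Extend an n x m matrix to max(n, m) x max(n, m) with dummy entries."""
    n, m = len(A), len(A[0])
    size = max(n, m)
    return [[A[i][j] if i < n and j < m else fill for j in range(size)]
            for i in range(size)]


def negate(A):
    """Turn a maximization problem into a minimization one (weights times -1)."""
    return [[-x for x in row] for row in A]


print(pad_to_square([[1, 2, 3], [4, 5, 6]]))  # [[1, 2, 3], [4, 5, 6], [0, 0, 0]]
print(negate([[1, 2], [3, 4]]))               # [[-1, -2], [-3, -4]]
```

Elements chosen in a dummy row or column are simply dropped from the final answer.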

Hungarian algorithm

Historical reference

The algorithm was developed and published by Harold Kuhn in 1955. Kuhn himself gave it the name "Hungarian" because it was based on the earlier work of the Hungarian mathematicians Dénes Kőnig and Jenő Egerváry. In 1957, James Munkres showed that this algorithm runs in (strictly) polynomial time, independently of the costs. Therefore, in the literature, this algorithm is known not only as the "Hungarian" algorithm, but also as the "Kuhn-Munkres algorithm" or "Munkres algorithm". However, in 2006 it was discovered that the same algorithm had been invented a century before Kuhn by the German mathematician Carl Gustav Jacobi. His work, About the research of the order of a system of arbitrary ordinary differential equations, which was published posthumously in 1890, contained, among other findings, a polynomial algorithm for solving the assignment problem. Unfortunately, since the publication was in Latin, it went unnoticed among mathematicians.

It is also worth noting that Kuhn's original algorithm had an asymptotic complexity of $\mathcal{O}(n^4)$, and only later did Jack Edmonds and Richard Karp (and independently Tomizawa) show how to improve it to an asymptotic complexity of $\mathcal{O}(n^3)$.

The $\mathcal{O}(n^4)$ algorithm

To avoid ambiguity, we note right away that we are mainly concerned with the assignment problem in a matrix formulation (i.e., given a matrix $A$ , you need to select $n$ cells from it that are in different rows and columns). We index arrays starting with $1$ , i.e., for example, a matrix $A$ has indices $A[1 \dots n][1 \dots n]$ .

We will also assume that all numbers in matrix A are non-negative (if this is not the case, you can always make the matrix non-negative by adding some constant to all numbers).

Let's call a potential two arbitrary arrays of numbers $u[1 \ldots n]$ and $v[1 \ldots n]$, such that the following condition is satisfied:

$$u[i]+v[j]\leq A[i][j],\quad i=1\dots n,\ j=1\dots n$$

(As you can see, $u[i]$ corresponds to the $i$ -th row, and $v[j]$ corresponds to the $j$ -th column of the matrix).

Let's call the value $f$ of the potential the sum of its elements:

$$f=\sum_{i=1}^{n} u[i]+\sum_{j=1}^{n} v[j].$$

On one hand, it is easy to see that the cost of the desired solution $sol$ is not less than the value of any potential.

Lemma. $sol\geq f.$

The desired solution of the problem consists of $n$ cells of the matrix $A$ , so $u[i]+v[j]\leq A[i][j]$ for each of them. Since all the elements in $sol$ are in different rows and columns, summing these inequalities over all the selected $A[i][j]$ , you get $f$ on the left side of the inequality, and $sol$ on the right side.

On the other hand, it turns out that there is always a solution and a potential that turn this inequality into an equality. The Hungarian algorithm described below will be a constructive proof of this fact. For now, let's just pay attention to the fact that if some solution has a cost equal to the value of some potential, then this solution is optimal.
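The definitions and the bound $sol \geq f$ can be checked numerically on a small matrix. The Python sketch below uses 0-based indices, and the helper names `is_potential` and `potential_value`, the matrix, and the chosen arrays $u$, $v$ are all illustrative assumptions rather than part of the algorithm.

```python
def is_potential(A, u, v):
    """True if u[i] + v[j] <= A[i][j] holds for every cell."""
    return all(u[i] + v[j] <= A[i][j]
               for i in range(len(A)) for j in range(len(A[0])))


def potential_value(u, v):
    """The value f of the potential: the sum of all its elements."""
    return sum(u) + sum(v)


A = [[2500, 4000, 3500],
     [4000, 6000, 3500],
     [2000, 4000, 2500]]
u = [2500, 3500, 2000]   # one value per row
v = [0, 1500, 0]         # one value per column
perm = [1, 2, 0]         # column chosen in each row
sol = sum(A[i][perm[i]] for i in range(3))

print(is_potential(A, u, v), potential_value(u, v), sol)  # True 9500 9500
```

Here the value of the potential equals the cost of the chosen cells, so by the lemma this selection is optimal. The zero potential is also valid for this matrix, but its value 0 gives a much weaker lower bound.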

Let's fix some potential. Let's call an edge $(i,j)$ rigid if $u[i]+v[j]=A[i][j].$

Recall an alternative formulation of the assignment problem, using a bipartite graph. Denote with $H$ a bipartite graph composed only of rigid edges. The Hungarian algorithm will maintain, for the current potential, the maximum-number-of-edges matching $M$ of the graph $H$ . As soon as $M$ contains $n$ edges, then the solution to the problem will be just $M$ (after all, it will be a solution whose cost coincides with the value of a potential).

Let's proceed directly to the description of the algorithm .

Step 1. At the beginning, the potential is assumed to be zero ( $u[i]=v[i]=0$ for all $i$ ), and the matching $M$ is assumed to be empty.

Step 2. Further, at each step of the algorithm, we try, without changing the potential, to increase the cardinality of the current matching $M$ by one (recall that the matching is searched in the graph of rigid edges $H$ ). To do this, the usual Kuhn Algorithm for finding the maximum matching in bipartite graphs is used. Let us recall the algorithm here. All edges of the matching $M$ are oriented in the direction from the right part to the left one, and all other edges of the graph $H$ are oriented in the opposite direction.

Recall (from the terminology of searching for matchings) that a vertex is called saturated if an edge of the current matching is adjacent to it. A vertex that is not adjacent to any edge of the current matching is called unsaturated. A path of odd length, in which the first edge does not belong to the matching, and for all subsequent edges there is an alternating belonging to the matching (belongs/does not belong) - is called an augmenting path. From all unsaturated vertices in the left part, a depth-first or breadth-first traversal is started. If, as a result of the search, it was possible to reach an unsaturated vertex of the right part, we have found an augmenting path from the left part to the right one. If we include odd edges of the path and remove the even ones in the matching (i.e. include the first edge in the matching, exclude the second, include the third, etc.), then we will increase the matching cardinality by one.

If there was no augmenting path, then the current matching $M$ is maximal in the graph $H$ .
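The augmenting-path search recalled above can be sketched in Python as a depth-first variant of Kuhn's algorithm. This is an illustration under assumptions: the graph $H$ is given as adjacency lists `adj` (for each left vertex, the right vertices joined to it by a rigid edge), vertices are 0-based, and the names `kuhn_max_matching` and `try_augment` are hypothetical.

```python
def kuhn_max_matching(adj, n_right):
    """Return (matching size, match_right), where match_right[j] is the left
    vertex matched to right vertex j, or -1 if j is unsaturated."""
    match_right = [-1] * n_right

    def try_augment(i, visited):
        # Look for an augmenting path that starts at left vertex i.
        for j in adj[i]:
            if j in visited:
                continue
            visited.add(j)
            # Either j is unsaturated, or its partner can be re-matched elsewhere.
            if match_right[j] == -1 or try_augment(match_right[j], visited):
                match_right[j] = i
                return True
        return False

    size = 0
    for i in range(len(adj)):
        if try_augment(i, set()):
            size += 1
    return size, match_right


print(kuhn_max_matching([[0, 1], [0], [1, 2]], 3))  # (3, [1, 0, 2])
```

In the example, left vertex 1 can only use right vertex 0, so the search re-routes left vertex 0 to right vertex 1 along an augmenting path.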

Step 3. If at the current step, it is not possible to increase the cardinality of the current matching, then a recalculation of the potential is performed in such a way that, at the next steps, there will be more opportunities to increase the matching.

Denote by $Z_1$ the set of vertices of the left part that were visited during the last traversal of Kuhn's algorithm, and by $Z_2$ the set of visited vertices of the right part.

Let's calculate the value $\Delta$:

$$\Delta=\min_{i\in Z_1,\ j\notin Z_2} \left(A[i][j]-u[i]-v[j]\right).$$

Lemma. $\Delta > 0.$

Suppose $\Delta=0$. Then there exists a rigid edge $(i,j)$ with $i\in Z_1$ and $j\notin Z_2$. It follows that the edge $(i,j)$ must be oriented from the right part to the left one, i.e. $(i,j)$ must be included in the matching $M$. However, this is impossible, because we could not get to the saturated vertex $i$ except by going along the edge from $j$ to $i$. So $\Delta > 0$.

Now let's recalculate the potential in this way:

for all vertices $i\in Z_1$ , do $u[i] \gets u[i]+\Delta$ ,

for all vertices $j\in Z_2$ , do $v[j] \gets v[j]-\Delta$ .
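One recalculation of the potential can be sketched in Python. The sketch assumes 0-based indices, a square matrix, and that $\Delta$ is the minimum of $A[i][j]-u[i]-v[j]$ over visited rows $i\in Z_1$ and unvisited columns $j\notin Z_2$; the function name `recalculate_potential` and the example data are illustrative.

```python
def recalculate_potential(A, u, v, Z1, Z2):
    """Return (delta, new_u, new_v) after one potential recalculation.
    Z1: set of visited rows, Z2: set of visited columns (0-based)."""
    n = len(A)
    delta = min(A[i][j] - u[i] - v[j]
                for i in Z1 for j in range(n) if j not in Z2)
    new_u = [u[i] + delta if i in Z1 else u[i] for i in range(n)]
    new_v = [v[j] - delta if j in Z2 else v[j] for j in range(n)]
    return delta, new_u, new_v


A = [[1, 2],
     [1, 3]]
# Both rows have been visited; column 0 is visited and matched to row 0.
delta, u, v = recalculate_potential(A, [1, 1], [0, 0], Z1={0, 1}, Z2={0})
print(delta, u, v)  # 1 [2, 2] [-1, 0]
```

After the update the edge between row 0 and column 1 becomes rigid ($u[0]+v[1]=2=A[0][1]$), while the edges into column 0 stay rigid, so the traversal can now reach a new column.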

Lemma. The resulting potential is still a correct potential.

We will show that, after recalculation, $u[i]+v[j]\leq A[i][j]$ for all $i,j$. For all the elements of $A$ with $i\in Z_1$ and $j\in Z_2$, the sum $u[i]+v[j]$ does not change, so the inequality remains true. The same holds for the elements with $i\notin Z_1$ and $j\notin Z_2$, since neither $u[i]$ nor $v[j]$ changes. For all the elements with $i\notin Z_1$ and $j\in Z_2$, the sum $u[i]+v[j]$ decreases by $\Delta$, so the inequality is still true. For the remaining elements, with $i\in Z_1$ and $j\notin Z_2$, the sum increases by $\Delta$, but the inequality is still preserved, since the value $\Delta$ is, by definition, the maximum increase that does not violate the inequality.

Lemma. The old matching $M$ of rigid edges is valid, i.e. all edges of the matching will remain rigid.

For some rigid edge $(i,j)$ to stop being rigid as a result of a change in potential, it is necessary that equality $u[i] + v[j] = A[i][j]$ turns into inequality $u[i] + v[j] < A[i][j]$ . However, this can happen only when $i \notin Z_1$ and $j \in Z_2$ . But $i \notin Z_1$ implies that the edge $(i,j)$ could not be a matching edge.

Lemma. After each recalculation of the potential, the number of vertices reachable by the traversal, i.e. $|Z_1|+|Z_2|$ , strictly increases.

First, note that any vertex that was reachable before recalculation, is still reachable. Indeed, if some vertex is reachable, then there is some path from reachable vertices to it, starting from the unsaturated vertex of the left part; since for edges of the form $(i,j),\ i\in Z_1,\ j\in Z_2$ the sum $u[i]+v[j]$ does not change, this entire path will be preserved after changing the potential. Secondly, we show that after a recalculation, at least one new vertex will be reachable. This follows from the definition of $\Delta$ : the edge $(i,j)$ which $\Delta$ refers to will become rigid, so vertex $j$ will be reachable from vertex $i$ .

Due to the last lemma, no more than $n$ potential recalculations can occur before an augmenting path is found and the matching cardinality of $M$ is increased. Thus, sooner or later, a potential that corresponds to a perfect matching $M^*$ will be found, and $M^*$ will be the answer to the problem. If we talk about the complexity of the algorithm, then it is $\mathcal{O}(n^4)$ : in total there should be at most $n$ increases in matching, before each of which there are no more than $n$ potential recalculations, each of which is performed in time $\mathcal{O}(n^2)$ .

We will not give the implementation for the $\mathcal{O}(n^4)$ algorithm here, since it will turn out to be no shorter than the implementation for the $\mathcal{O}(n^3)$ one, described below.

The $\mathcal{O}(n^3)$ algorithm

Now let's learn how to implement the same algorithm in $\mathcal{O}(n^3)$ (for rectangular problems $n \times m$ , $\mathcal{O}(n^2m)$ ).

The key idea is to consider matrix rows one by one, and not all at once. Thus, the algorithm described above will take the following form:

1. Consider the next row of the matrix $A$.

2. While there is no augmenting path starting in this row, recalculate the potential.

3. As soon as an augmenting path is found, propagate the matching along it (thus including the last edge in the matching), and restart from step 1 (to consider the next row).

To achieve the required complexity, it is necessary to implement steps 2-3, which are performed for each row of the matrix, in time $\mathcal{O}(n^2)$ (for rectangular problems in $\mathcal{O}(nm)$ ).

To do this, recall two facts proved above:

With a change in the potential, the vertices that were reachable by Kuhn's traversal will remain reachable.

In total, only $\mathcal{O}(n)$ recalculations of the potential could occur before an augmenting path was found.

From this follow these key ideas that allow us to achieve the required complexity:

To check for the presence of an augmenting path, there is no need to start the Kuhn traversal again after each potential recalculation. Instead, you can make the Kuhn traversal in an iterative form : after each recalculation of the potential, look at the added rigid edges and, if their left ends were reachable, mark their right ends reachable as well and continue the traversal from them.

Developing this idea further, we can present the algorithm as follows: at each step of the loop, the potential is recalculated. Subsequently, a column that has become reachable is identified (which will always exist as new reachable vertices emerge after every potential recalculation). If the column is unsaturated, an augmenting chain is discovered. Conversely, if the column is saturated, the matching row also becomes reachable.

To quickly recalculate the potential (faster than the $\mathcal{O}(n^2)$ naive version), you need to maintain auxiliary minima for each of the columns:

$minv[j]=\min_{i\in Z_1} A[i][j]-u[i]-v[j].$

It's easy to see that the desired value $\Delta$ is expressed in terms of them as follows:

$\Delta=\min_{j\notin Z_2} minv[j].$

Thus, finding $\Delta$ can now be done in $\mathcal{O}(n)$ .

It is necessary to update the array $minv$ when new visited rows appear. This can be done in $\mathcal{O}(n)$ for the added row (which adds up over all rows to $\mathcal{O}(n^2)$ ). It is also necessary to update the array $minv$ when recalculating the potential, which is also done in time $\mathcal{O}(n)$ ( $minv$ changes only for columns that have not yet been reached: namely, it decreases by $\Delta$ ).

Thus, the algorithm takes the following form: in the outer loop, we consider matrix rows one by one. Each row is processed in time $\mathcal{O}(n^2)$ , since only $\mathcal{O}(n)$ potential recalculations could occur (each in time $\mathcal{O}(n)$ ), and the array $minv$ is maintained in time $\mathcal{O}(n^2)$ ; Kuhn's algorithm will work in time $\mathcal{O}(n^2)$ (since it is presented in the form of $\mathcal{O}(n)$ iterations, each of which visits a new column).

The resulting complexity is $\mathcal{O}(n^3)$ or, if the problem is rectangular, $\mathcal{O}(n^2m)$ .

Implementation of the Hungarian algorithm

The implementation below was developed by Andrey Lopatin several years ago. It is distinguished by amazing conciseness: the entire algorithm consists of 30 lines of code .

The implementation finds a solution for the rectangular matrix $A[1\dots n][1\dots m]$ , where $n\leq m$ . The matrix is 1-based for convenience and code brevity: this implementation introduces a dummy zero row and zero column, which allows us to write many cycles in a general form, without additional checks.

Arrays $u[0 \ldots n]$ and $v[0 \ldots m]$ store potential. Initially, they are set to zero, which is consistent with a matrix of zero rows (Note that it is unimportant for this implementation whether or not the matrix $A$ contains negative numbers).

The array $p[0 \ldots m]$ contains a matching: for each column $j = 1 \ldots m$ , it stores the number $p[j]$ of the selected row (or $0$ if nothing has been selected yet). For the convenience of implementation, $p[0]$ is assumed to be equal to the number of the current row.

The array $minv[1 \ldots m]$ contains, for each column $j$ , the auxiliary minima necessary for a quick recalculation of the potential, as described above.

The array $way[1 \ldots m]$ contains information about where these minimums are reached so that we can later reconstruct the augmenting path. Note that, to reconstruct the path, it is sufficient to store only column values, since the row numbers can be taken from the matching (i.e., from the array $p$ ). Thus, $way[j]$ , for each column $j$ , contains the number of the previous column in the path (or $0$ if there is none).

The algorithm itself is an outer loop through the rows of the matrix , inside which the $i$ -th row of the matrix is considered. The first do-while loop runs until a free column $j0$ is found. Each iteration of the loop marks visited a new column with the number $j0$ (calculated at the last iteration; and initially equal to zero - i.e. we start from a dummy column), as well as a new row $i0$ - adjacent to it in the matching (i.e. $p[j0]$ ; and initially when $j0=0$ the $i$ -th row is taken). Due to the appearance of a new visited row $i0$ , you need to recalculate the array $minv$ and $\Delta$ accordingly. If $\Delta$ is updated, then the column $j1$ becomes the minimum that has been reached (note that with such an implementation $\Delta$ could turn out to be equal to zero, which means that the potential cannot be changed at the current step: there is already a new reachable column). After that, the potential and the $minv$ array are recalculated. At the end of the "do-while" loop, we found an augmenting path ending in a column $j0$ that can be "unrolled" using the ancestor array $way$ .

The constant INF is "infinity", i.e. some number, obviously greater than all possible numbers in the input matrix $A$ .
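The original listing is not reproduced in this text. The following is a Python sketch that follows the description above; it is not the author's code. The arrays `u`, `v`, `p`, `way`, `minv` and the variables `j0`, `i0`, `j1` are named as in the text, while the function name `hungarian`, the 0-based input and output, and the `while True ... break` emulation of the do-while loop are assumptions of this sketch.

```python
INF = float("inf")


def hungarian(A):
    """A: n x m cost matrix (0-based list of lists) with n <= m.
    Returns (cost, ans), where ans[i] is the 0-based column chosen for row i."""
    n, m = len(A), len(A[0])
    u = [0] * (n + 1)      # row potentials, index 0 is the dummy row
    v = [0] * (m + 1)      # column potentials, index 0 is the dummy column
    p = [0] * (m + 1)      # p[j] = row matched to column j (0 = none)
    way = [0] * (m + 1)    # previous column on the augmenting path
    for i in range(1, n + 1):
        p[0] = i
        j0 = 0
        minv = [INF] * (m + 1)
        used = [False] * (m + 1)
        while True:                      # runs until a free column is found
            used[j0] = True
            i0 = p[j0]
            delta = INF
            j1 = 0
            for j in range(1, m + 1):
                if not used[j]:
                    cur = A[i0 - 1][j - 1] - u[i0] - v[j]
                    if cur < minv[j]:
                        minv[j] = cur
                        way[j] = j0
                    if minv[j] < delta:
                        delta = minv[j]
                        j1 = j
            for j in range(m + 1):       # recalculate potential and minv
                if used[j]:
                    u[p[j]] += delta
                    v[j] -= delta
                else:
                    minv[j] -= delta
            j0 = j1
            if p[j0] == 0:
                break
        while j0:                        # unroll the augmenting path
            j1 = way[j0]
            p[j0] = p[j1]
            j0 = j1
    ans = [0] * n
    for j in range(1, m + 1):
        if p[j]:
            ans[p[j] - 1] = j - 1
    return -v[0], ans


print(hungarian([[3, 5], [10, 1]]))  # (4, [0, 1])
```

The sketch pads the potentials and the matching with a dummy index 0 exactly as the text describes, but shifts indices when reading `A` so that callers can pass an ordinary 0-based matrix.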

To restore the answer in a more familiar form, i.e. to find for each row $i = 1 \ldots n$ the number $ans[i]$ of the column selected in it, invert the array $p$: for every column $j = 1 \ldots m$ with $p[j] \neq 0$, set $ans[p[j]] = j$.
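This recovery step amounts to inverting the array $p$, which can be sketched in Python as follows. The sketch keeps the 1-based convention of the text (index 0 is the dummy entry), and the function name `rows_to_columns` is hypothetical.

```python
def rows_to_columns(p, n):
    """p[j] is the row matched to column j (1-based, 0 = no row); p[0] is the
    dummy entry and is ignored. Returns ans with ans[i] = column of row i."""
    ans = [0] * (n + 1)
    for j in range(1, len(p)):
        if p[j] != 0:
            ans[p[j]] = j
    return ans


# Column 1 holds row 3, column 2 holds row 1, column 3 holds row 2.
print(rows_to_columns([0, 3, 1, 2], 3))  # [0, 2, 3, 1]
```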

The cost of the matching can simply be taken as the potential of the zero column (taken with the opposite sign). Indeed, $-v[0]$ accumulates the sum of all the values of $\Delta$, i.e. the total change in the value of the potential. Although several values of $u[i]$ and $v[j]$ may change at once, each recalculation changes the value of the potential by exactly $\Delta$, since until an augmenting path is found, the number of reachable rows is exactly one more than the number of reachable columns (only the current row $i$ does not have a "pair" in the form of a visited column). Hence the cost of the matching is $-v[0]$.

Connection to the Successive Shortest Path Algorithm

The Hungarian algorithm can be seen as the Successive Shortest Path Algorithm , adapted for the assignment problem. Without going into the details, let's provide an intuition regarding the connection between them.

The Successive Shortest Path algorithm uses a modified version of Johnson's algorithm as a reweighting technique. It is divided into four steps:

1. Use the Bellman-Ford algorithm, starting from the source $s$ and, for each node, find the minimum weight $h(v)$ of a path from $s$ to $v$.

For every step of the main algorithm:

2. Reweight the edges of the original graph in this way: $w(u,v) \gets w(u,v)+h(u)-h(v)$.

3. Use Dijkstra's algorithm to find the shortest-paths subgraph of the original network.

4. Update potentials for the next iteration.

Given this description, we can observe that there is a strong analogy between $h(v)$ and potentials: it can be checked that they are equal up to a constant offset. In addition, it can be shown that, after reweighting, the set of all zero-weight edges represents the shortest-path subgraph where the main algorithm tries to increase the flow. This also happens in the Hungarian algorithm: we create a subgraph made of rigid edges (the ones for which the quantity $A[i][j]-u[i]-v[j]$ is zero), and we try to increase the size of the matching.

In step 4, all the $h(v)$ are updated: every time we modify the flow network, we should guarantee that the distances from the source are correct (otherwise, in the next iteration, Dijkstra's algorithm might fail). This sounds like the update performed on the potentials, but in this case, they are not equally incremented.
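The reweighting used by Johnson's technique can be illustrated with a short Python sketch. It is only meant to show that, after computing $h$ with Bellman-Ford, the reweighted edges are non-negative and the edges on shortest paths get weight zero; it is not a min-cost-flow implementation. The function names, the edge-list representation `(a, b, w)`, and the example graph are assumptions.

```python
def bellman_ford(n, edges, source):
    """h[v] = minimum weight of a path from source to v (no negative cycles)."""
    inf = float("inf")
    h = [inf] * n
    h[source] = 0
    for _ in range(n - 1):
        for a, b, w in edges:
            if h[a] + w < h[b]:
                h[b] = h[a] + w
    return h


def reweight(edges, h):
    """Apply w(a, b) <- w(a, b) + h(a) - h(b) to every edge."""
    return [(a, b, w + h[a] - h[b]) for a, b, w in edges]


edges = [(0, 1, 4), (0, 2, 1), (2, 1, -2), (1, 3, 3)]
h = bellman_ford(4, edges, 0)
print(h)                   # [0, -1, 1, 2]
print(reweight(edges, h))  # [(0, 1, 5), (0, 2, 0), (2, 1, 0), (1, 3, 0)]
```

The zero-weight edges after reweighting play the same role as the rigid edges, for which $A[i][j]-u[i]-v[j]=0$.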

To deepen the understanding of potentials, refer to this article .

Task examples

Here are a few examples related to the assignment problem, from very trivial to less obvious tasks:

Given a bipartite graph, it is required to find in it the maximum matching with the minimum weight (i.e., first of all, the size of the matching is maximized, and secondly, its cost is minimized). To solve it, we simply build an assignment problem, putting the number "infinity" in place of the missing edges. After that, we solve the problem with the Hungarian algorithm, and remove edges of infinite weight from the answer (they could enter the answer if the problem does not have a solution in the form of a perfect matching).

Given a bipartite graph, it is required to find in it the maximum matching with the maximum weight . The solution is again obvious, all weights must be multiplied by minus one.

The task of detecting moving objects in images : two images were taken, as a result of which two sets of coordinates were obtained. It is required to correlate the objects in the first and second images, i.e. determine for each point of the second image, which point of the first image it corresponded to. In this case, it is required to minimize the sum of distances between the compared points (i.e., we are looking for a solution in which the objects have taken the shortest path in total). To solve, we simply build and solve an assignment problem, where the weights of the edges are the Euclidean distances between points.

The task of detecting moving objects by locators : there are two locators that can't determine the position of an object in space, but only its direction. Both locators (located at different points) received information in the form of $n$ such directions. It is required to determine the position of objects, i.e. determine the expected positions of objects and their corresponding pairs of directions in such a way that the sum of distances from objects to direction rays is minimized. Solution: again, we simply build and solve the assignment problem, where the vertices of the left part are the $n$ directions from the first locator, the vertices of the right part are the $n$ directions from the second locator, and the weights of the edges are the distances between the corresponding rays.

Covering a directed acyclic graph with paths : given a directed acyclic graph, it is required to find the smallest number of paths (if equal, with the smallest total weight) so that each vertex of the graph lies in exactly one path. The solution is to build the corresponding bipartite graph from the given graph and find the maximum matching of the minimum weight in it. See separate article for more details.

Tree coloring book . Given a tree in which each vertex, except for leaves, has exactly $k-1$ children. It is required to choose for each vertex one of the $k$ colors available so that no two adjacent vertices have the same color. In addition, for each vertex and each color, the cost of painting this vertex with this color is known, and it is required to minimize the total cost. To solve this problem, we use dynamic programming. Namely, let's learn how to calculate the value $d[v][c]$ , where $v$ is the vertex number, $c$ is the color number, and the value $d[v][c]$ itself is the minimum cost needed to color all the vertices in the subtree rooted at $v$ , and the vertex $v$ itself with color $c$ . To calculate such a value $d[v][c]$ , it is necessary to distribute the remaining $k-1$ colors among the children of the vertex $v$ , and for this, it is necessary to build and solve the assignment problem (in which the vertices of the left part are colors, the vertices of the right part are children, and the weights of the edges are the corresponding values of $d$ ). Thus, each value $d[v][c]$ is calculated using the solution of the assignment problem, which ultimately gives the asymptotic $\mathcal{O}(nk^4)$ .

If, in the assignment problem, the weights are not on the edges, but on the vertices, and only on the vertices of the same part , then it's not necessary to use the Hungarian algorithm: just sort the vertices by weight and run the usual Kuhn algorithm (for more details, see a separate article ).

Consider the following special case . Let each vertex of the left part be assigned some number $\alpha[i]$ , and each vertex of the right part $\beta[j]$ . Let the weight of any edge $(i,j)$ be equal to $\alpha[i]\cdot \beta[j]$ (the numbers $\alpha[i]$ and $\beta[j]$ are known). Solve the assignment problem. To solve it without the Hungarian algorithm, we first consider the case when both parts have two vertices. In this case, as you can easily see, it is better to connect the vertices in the reverse order: connect the vertex with the smaller $\alpha[i]$ to the vertex with the larger $\beta[j]$ . This rule can be easily generalized to an arbitrary number of vertices: you need to sort the vertices of the first part in increasing order of $\alpha[i]$ values, the second part in decreasing order of $\beta[j]$ values, and connect the vertices in pairs in that order. Thus, we obtain a solution with complexity of $\mathcal{O}(n\log n)$ .
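The sorting rule can be sketched in a few lines of Python. The function name `min_product_assignment` is illustrative; the brute-force check at the end is only there to confirm the result on a tiny instance.

```python
from itertools import permutations

def min_product_assignment(alpha, beta):
    """Pair left vertices in increasing alpha order with right vertices
    in decreasing beta order; returns a list of (i, j) pairs."""
    left = sorted(range(len(alpha)), key=lambda i: alpha[i])
    right = sorted(range(len(beta)), key=lambda j: beta[j], reverse=True)
    return list(zip(left, right))

alpha = [3, 1, 2]
beta = [5, 4, 6]
pairs = min_product_assignment(alpha, beta)
total = sum(alpha[i] * beta[j] for i, j in pairs)

# Sanity check against all 3! assignments.
brute = min(sum(a * beta[j] for a, j in zip(alpha, perm))
            for perm in permutations(range(len(beta))))
```

Here `total` is $1\cdot 6 + 2\cdot 5 + 3\cdot 4 = 28$, which matches the brute-force minimum.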

The Problem of Potentials. Given a matrix $A[1 \ldots n][1 \ldots m]$, it is required to find two arrays $u[1 \ldots n]$ and $v[1 \ldots m]$ such that, for any $i$ and $j$, $u[i] + v[j] \leq A[i][j]$ and the sum of elements of arrays $u$ and $v$ is maximum. Knowing the Hungarian algorithm, the solution to this problem is not difficult: the Hungarian algorithm finds exactly such a potential $u, v$ that satisfies the condition of the problem. On the other hand, without knowledge of the Hungarian algorithm, it seems almost impossible to solve such a problem.

This task is also called the dual problem of the assignment problem: minimizing the total cost of the assignment is equivalent to maximizing the sum of the potentials.
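To make the duality concrete, here is a compact Python sketch of the potentials-based formulation of the Hungarian algorithm, which adds rows one at a time and runs in $\mathcal{O}(n^2 m)$. The function name `hungarian` and the layout of the returned tuple are assumptions made for this example. It returns the minimum cost, the column chosen for each row, and the potentials $u$ and $v$.

```python
def hungarian(a):
    """a: n x m cost matrix with n <= m.
    Returns (total_cost, row_to_col, u, v)."""
    n, m = len(a), len(a[0])
    INF = float("inf")
    u = [0] * (n + 1)          # row potentials (1-indexed)
    v = [0] * (m + 1)          # column potentials (1-indexed, v[0] is auxiliary)
    p = [0] * (m + 1)          # p[j] = row matched to column j, 0 if free
    way = [0] * (m + 1)        # previous column on the augmenting path
    for i in range(1, n + 1):
        p[0] = i
        j0 = 0
        minv = [INF] * (m + 1)
        used = [False] * (m + 1)
        while True:
            used[j0] = True
            i0, delta, j1 = p[j0], INF, 0
            for j in range(1, m + 1):
                if not used[j]:
                    cur = a[i0 - 1][j - 1] - u[i0] - v[j]
                    if cur < minv[j]:
                        minv[j], way[j] = cur, j0
                    if minv[j] < delta:
                        delta, j1 = minv[j], j
            # Shift potentials so that a new tight edge appears.
            for j in range(m + 1):
                if used[j]:
                    u[p[j]] += delta
                    v[j] -= delta
                else:
                    minv[j] -= delta
            j0 = j1
            if p[j0] == 0:
                break
        # Flip the matching along the augmenting path.
        while j0:
            j1 = way[j0]
            p[j0] = p[j1]
            j0 = j1
    row_to_col = [0] * n
    for j in range(1, m + 1):
        if p[j]:
            row_to_col[p[j] - 1] = j - 1
    return -v[0], row_to_col, u[1:], v[1:]
```

For the matrix `[[4, 1, 3], [2, 0, 5], [3, 2, 2]]` the sketch returns cost 5 with `row_to_col == [1, 0, 2]`, and the potentials `u = [3, 2, 2]`, `v = [0, -2, 0]` satisfy $u[i] + v[j] \leq A[i][j]$ everywhere while summing to the same value 5.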

Literature

Ravindra Ahuja, Thomas Magnanti, James Orlin. Network Flows [1993]

Harold Kuhn. The Hungarian Method for the Assignment Problem [1955]

James Munkres. Algorithms for Assignment and Transportation Problems [1957]

Practice Problems

UVA - Crime Wave - The Sequel

UVA - Warehouse

SGU - Beloved Sons

UVA - The Great Wall Game

UVA - Jogging Trails

Hungarian Method Examples

Now we will examine a few highly simplified illustrations of the Hungarian method for solving an assignment problem.

Later in the chapter, you will find more practical versions of assignment models, such as the crew assignment problem and the travelling salesman problem.

Example 1: Hungarian Method

The Funny Toys Company has four men available for work on four separate jobs. Only one man can work on any one job. The cost of assigning each man to each job is given in the following table. The objective is to assign men to jobs in such a way that the total cost of assignment is minimum.

This is a minimization example of the assignment problem. We will use the Hungarian algorithm to solve it.

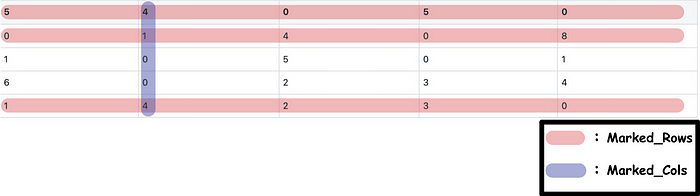

Step 1. Identify the minimum element in each row and subtract it from every element of that row. The result is shown in the following table.

"A man has one hundred dollars and you leave him with two dollars, that's subtraction." -Mae West

Step 2. Identify the minimum element in each column and subtract it from every element of that column.

Step 3. Make the assignments for the reduced matrix obtained from steps 1 and 2 in the following way:

- For every zero that becomes assigned, cross out (X) all other zeros in the same row and the same column.

- If for a row and a column, there are two or more zeros and one cannot be chosen by inspection, choose the cell arbitrarily for assignment.

Step 4. An optimal assignment is found if the number of assigned cells equals the number of rows (and columns). In case you have chosen a zero cell arbitrarily, there may be alternate optimal solutions. If no optimal solution is found, go to step 5.

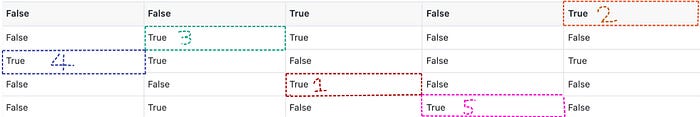

Step 5. Draw the minimum number of vertical and horizontal lines necessary to cover all the zeros in the reduced matrix obtained from step 3 by adopting the following procedure:

- (i) Mark all the rows that do not have assignments.

- (ii) Mark all the columns (not already marked) which have zeros in the marked rows.

- (iii) Mark all the rows (not already marked) that have assignments in marked columns.

- (iv) Repeat steps 5 (ii) and (iii) until no more rows or columns can be marked.

- (v) Draw straight lines through all unmarked rows and marked columns.

You can also draw the minimum number of lines by inspection.
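The marking procedure can be sketched in Python as follows. The function name `cover_zeros` and the representation of assignments as `(row, column)` pairs are assumptions made for this example. Note that the lines are guaranteed to be minimal only when the given assignment uses as many zeros as possible.

```python
def cover_zeros(matrix, assigned):
    """matrix: reduced square cost matrix; assigned: list of (row, col)
    zeros chosen so far. Returns (rows_with_lines, cols_with_lines)."""
    n = len(matrix)
    row_of_col = {c: r for r, c in assigned}
    # Mark all rows that have no assignment.
    marked_rows = set(range(n)) - {r for r, _ in assigned}
    marked_cols = set()
    changed = True
    while changed:  # repeat until nothing new can be marked
        changed = False
        # Mark columns that have a zero in a marked row.
        for r in list(marked_rows):
            for c in range(n):
                if matrix[r][c] == 0 and c not in marked_cols:
                    marked_cols.add(c)
                    changed = True
        # Mark rows that have an assignment in a marked column.
        for c in list(marked_cols):
            r = row_of_col.get(c)
            if r is not None and r not in marked_rows:
                marked_rows.add(r)
                changed = True
    # Lines go through unmarked rows and marked columns.
    return sorted(set(range(n)) - marked_rows), sorted(marked_cols)
```

For the matrix `[[0, 1, 2], [0, 3, 4], [5, 0, 0]]` with assignments `[(0, 0), (2, 1)]`, the function returns `([2], [0])`: one line through the third row and one through the first column cover every zero.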

Step 6. Select the smallest element (i.e., 1) from all the uncovered elements. Subtract this smallest element from all the uncovered elements and add it to the elements that lie at the intersection of two lines. Thus, we obtain another reduced matrix for fresh assignment.

Now again make the assignments for the reduced matrix.

Final Table: Hungarian Method

Since the number of assignments is equal to the number of rows (& columns), this is the optimal solution.

The total cost of assignment = A1 + B4 + C2 + D3

Substituting values from original table: 20 + 17 + 17 + 24 = Rs. 78.

6.2 Assignment problem and Hungarian algorithm

July 24, 2024

The assignment problem tackles matching tasks to agents efficiently. It's a special case of transportation problems, with one-to-one matching and equal-sized sets. The cost matrix represents assignment costs, and the goal is to minimize total cost.

The Hungarian algorithm solves assignment problems step-by-step. It creates a reduced cost matrix, finds zeros, and iteratively improves the solution. This method guarantees an optimal assignment , making it a powerful tool for solving real-world matching problems.

Assignment Problem and Hungarian Algorithm

Assignment problem as transportation subset

- One-to-one matching between equal-sized sets assigns tasks to agents efficiently

- Supply nodes (agents) and demand nodes (tasks) all have values of 1

- Cost matrix represents assignment costs for each agent-task pair

- Balanced problem guarantees an integer optimal solution: the constraint matrix is totally unimodular, so the linear programming relaxation already yields 0/1 values

Linear programming for assignments

- Decision variables $x_{ij}$ (1 if agent $i$ is assigned to task $j$, 0 otherwise) determine optimal assignments

- Objective function minimizes total assignment cost $\sum_{i=1}^n \sum_{j=1}^n c_{ij}x_{ij}$

- Constraints ensure each agent and each task is assigned once: $\sum_{j=1}^n x_{ij} = 1$, $\sum_{i=1}^n x_{ij} = 1$

- Non-negativity and binary constraints maintain feasibility: $x_{ij} \geq 0$, $x_{ij} \in \{0,1\}$

Hungarian algorithm application

- Subtract row minima from each row in cost matrix

- Subtract column minima from each column

- Draw minimum lines covering all zeros

- Create additional zeros by subtracting smallest uncovered element

- Find complete assignment using covered zeros

- Iterative process improves solution until optimal assignment found

- Optimality proof uses dual variables and complementary slackness conditions

Reduced cost matrices in assignments

- Subtracting row and column minima creates reduced cost matrix preserving optimal solution

- Non-negative elements with at least one zero per row and column

- Reduced costs show potential objective value improvements

- Zero reduced costs indicate potentially optimal assignments

- Simplifies optimal solution search in Hungarian algorithm

- Allows visual identification of potential assignments (matching zeros)

Key Terms to Review

The assignment problem is a type of optimization problem where the goal is to assign resources to tasks in the most efficient way possible. It typically involves a cost matrix that quantifies the cost associated with each potential assignment, and the objective is to minimize the total cost or maximize the overall effectiveness of the assignments. This problem is prevalent in various fields such as logistics, scheduling, and resource allocation.

© 2024 Fiveable Inc. All rights reserved.

Hungarian Algorithm Introduction & Python Implementation

How to use the Hungarian method to solve the linear assignment problem.

By Eason on 2021-08-02

In this article, I will introduce how to use the Hungarian method to solve the linear assignment problem and provide my personal Python code solution.

So… What is the linear assignment problem?

The linear assignment problem is about getting the most out of limited resources: maximizing the total benefit or minimizing the total expenditure. For instance, below is a 2D matrix where each row represents a different supplier and each column represents the cost of employing that supplier to produce a particular product. Each supplier can specialize in only one of these products. In other words, exactly one element must be selected from each row and each column of the matrix, and the sum of the selected elements must be minimized (minimum total cost).

The cost of producing different goods by different producers:

Indeed, this is a simple example. By trying out the possible combinations, we can see that the smallest sum is 13, so supplier A supplies Bubble Tea, supplier B supplies Milk Tea, and supplier C supplies Fruit Tea. However, such attempts do not follow a clear rule and become inefficient when applied to large tasks. Therefore, the next section will introduce step by step the Hungarian algorithm, which can be applied to the linear assignment problem.

Hungarian Algorithm & Python Code Step by Step

In this section, we will show how to use the Hungarian algorithm to solve linear assignment problems and find the minimum combinations in the matrix. Of course, the Hungarian algorithm can also be used to find the maximum combination.

Step 0. Prepare Operations

First, an N by N matrix is generated to be used for the Hungarian algorithm (Here, we use a 5 by 5 square matrix as an example).

The above code randomly generates a 5x5 cost matrix of integers between 0 and 10.

If we want to find the maximum sum, we could do the opposite. The matrix to be solved is regarded as the profit matrix, and the maximum value in the matrix is set as the common price of all goods. The cost matrix is obtained by subtracting the profit matrix from the maximum value. Finally, the cost matrix is substituted into the Hungarian algorithm to obtain the minimized combination and then remapped back to the profit matrix to obtain the maximized sum value and composition result.

The above code randomly generates a 5x5 profit matrix of integers between 0 and 10 and generate a corresponding cost matrix
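A minimal sketch of both preparations in plain Python is given below. The helper names `random_matrix` and `profit_to_cost`, the inclusive range 0 to 10, and the optional `seed` parameter are assumptions made for illustration.

```python
import random

def random_matrix(n, low=0, high=10, seed=None):
    """n x n matrix of random integers in [low, high] (inclusive)."""
    rng = random.Random(seed)
    return [[rng.randint(low, high) for _ in range(n)] for _ in range(n)]

def profit_to_cost(profit):
    """Turn a profit matrix into a cost matrix: cost = max(profit) - profit.
    Minimizing the cost then maximizes the original profit."""
    top = max(max(row) for row in profit)
    return [[top - x for x in row] for row in profit]

cost_matrix = random_matrix(5, seed=0)       # for a minimization problem
profit_matrix = random_matrix(5, seed=1)     # for a maximization problem
cost_from_profit = profit_to_cost(profit_matrix)
```

For example, `profit_to_cost([[1, 5], [7, 3]])` gives `[[6, 2], [0, 4]]`: the most profitable cell becomes the cheapest one.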

By following the steps above, you can randomly generate either the cost matrix or the profit matrix. Next, we will move into the introduction of the Hungarian algorithm, and for the sake of illustration, the following sections will be illustrated using the cost matrix shown below. We will use the Hungarian algorithm to solve the linear assignment problem of the cost matrix and find the corresponding minimum sum.

Example cost matrix:

Step 1. Every column and every row subtract its internal minimum

First, every column and every row must subtract its internal minimum. After subtracting the minimum, the cost matrix will look like this.

Cost matrix after step 1:

And the current code is like this:
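A minimal sketch of this reduction in plain Python, assuming the matrix is a list of lists and that rows are reduced before columns (the function name `reduce_matrix` is illustrative):

```python
def reduce_matrix(cost):
    """Subtract each row's minimum, then each column's minimum."""
    # Row reduction: every row gets at least one zero.
    rows = [[x - min(row) for x in row] for row in cost]
    # Column reduction on the row-reduced matrix.
    col_min = [min(col) for col in zip(*rows)]
    return [[x - col_min[j] for j, x in enumerate(row)] for row in rows]
```

For `[[4, 1, 3], [2, 0, 5], [3, 2, 2]]` this yields `[[2, 0, 2], [1, 0, 5], [0, 0, 0]]`, where every row and every column contains at least one zero.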

Step 2.1. Min_zero_row Function Implementation

At first, we need to find the row with the fewest zero elements. So, we can convert the previous matrix to the boolean matrix(0 → True, Others → False).

Transform matrix to boolean matrix:

Corresponding Boolean matrix:

Therefore, we can use the “min_zero_row” function to find the corresponding row.

The row which contains the least 0:

Third, mark any 0 elements on the corresponding row and clean up its row and column (converts elements on the Boolean matrix to False). The coordinates of the element are stored in mark_zero.

Hence, the boolean matrix will look like this:

The boolean matrix after the first process. The fourth row has been changed to all False.

The process is repeated several times until the elements in the boolean matrix are all False. The below picture shows the order in which they are marked.

The possible answer composition:
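The marking loop of this step can be sketched as follows. This is an illustration under stated assumptions rather than the article's listing: the function name `mark_zeros` is hypothetical, plain lists replace the boolean array, and the first zero of the selected row is the one that gets marked.

```python
def mark_zeros(matrix):
    """Greedily mark zeros: repeatedly take the row with the fewest
    remaining zeros, mark its first zero, then clear that row and column."""
    zero = [[x == 0 for x in row] for row in matrix]   # boolean matrix
    marked = []
    while any(any(row) for row in zero):
        # Row with the fewest (but at least one) remaining zeros.
        r = min((i for i, row in enumerate(zero) if any(row)),
                key=lambda i: sum(zero[i]))
        c = zero[r].index(True)
        marked.append((r, c))
        # Clear the chosen row and column.
        zero[r] = [False] * len(zero[r])
        for row in zero:
            row[c] = False
    return marked
```

On the reduced matrix `[[2, 0, 2], [1, 0, 5], [0, 0, 0]]` the function returns `[(0, 1), (2, 0)]`. Only two zeros are marked for a 3 by 3 matrix, so this matrix would need the adjustment step before a full assignment exists.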

Step 2.2. Mark_matrix Function Implementation

After getting Zero_mat from the step 2–1, we can check it and mark the matrix according to certain rules. The whole rule can be broken down into several steps:

- Mark rows that do not contain marked 0 elements and store row indexes in the non_marked_row

- Search non_marked_row element, and find out if there are any unmarked 0 elements in the corresponding column

- Store the column indexes in the marked_cols

- Compare the column indexes stored in marked_zero and marked_cols

- If a matching column index exists, the corresponding row_index is saved to non_marked_rows

- Next, the row indexes that are not in non_marked_row are stored in marked_rows

Finally, the whole mark_matrix function is finished and returns marked_zero, marked_rows, and marked_cols. At this point, we will be able to decide the result based on the returned information.

If we use the example cost matrix, the corresponding marked_zero , marked_rows, and marked_cols are as follows:

- marked_zero : [(3, 2), (0, 4), (1, 1), (2, 0), (4, 3)]

- marked_rows : [0, 1, 2, 3, 4]

- marked_cols : []

Step 3. Identify the Result

At this step, if the sum of the lengths of marked_rows and marked_cols is equal to the length of the cost matrix, it means that the solution of the linear assignment problem has been found successfully, and marked_zero stores the solution coordinates. Fortunately, in the example matrix, we find the answer on the first try. Therefore, we can skip to step 5 and calculate the solution.

However, everything is hardly plain sailing. Most of the time, we will not find the solution on the first try, such as the following matrix:

After Step 1 & 2 , the corresponding matrix, marked_rows, and marked_cols are as follows:

The sum of the lengths of Marked_Rows and Marked_Cols is 4 (less than 5).

Apparently, the sum of the lengths is less than the length of the matrix. At this time, we need to go into Step 4 to adjust the matrix.

Step 4. Adjust Matrix

In Step 4, we're going to put the matrix after Step 1 into the Adjust_Matrix function . Taking the latter matrix in Step 3 as an example, the matrix to be modified in Adjust_Matrix is:

The whole function can be separated into three steps:

- Find the minimum value among the elements that are neither in marked_rows nor in marked_cols. In this example, the minimum value is 1.

- Subtract the minimum value obtained in the previous step from every element that is neither in marked_rows nor in marked_cols.

- Add the minimum value obtained in Step 4–1 to every element that is in marked_rows and also in marked_cols.

Return the adjusted matrix and repeat Step 2 and Step 3 until the conditions satisfy the requirement of entering Step 5.
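The three sub-steps can be sketched in plain Python as below. The signature is an assumption made for this example; `marked_rows` and `marked_cols` are taken to be the indexes of the rows and columns covered by lines, as in the text.

```python
def adjust_matrix(matrix, marked_rows, marked_cols):
    """Create new zeros: subtract the smallest uncovered value from all
    uncovered cells and add it to cells covered by two lines."""
    n, m = len(matrix), len(matrix[0])
    # Smallest element not covered by any line.
    smallest = min(matrix[i][j]
                   for i in range(n) if i not in marked_rows
                   for j in range(m) if j not in marked_cols)
    adjusted = [row[:] for row in matrix]   # leave the input untouched
    for i in range(n):
        for j in range(m):
            if i not in marked_rows and j not in marked_cols:
                adjusted[i][j] -= smallest      # uncovered cell
            elif i in marked_rows and j in marked_cols:
                adjusted[i][j] += smallest      # intersection of two lines
    return adjusted
```

For the matrix `[[2, 0, 2], [1, 0, 5], [0, 0, 0]]` with lines through row 2 and column 1, the smallest uncovered value is 1 and the result is `[[1, 0, 1], [0, 0, 4], [0, 1, 0]]`, which now admits a complete assignment of zeros.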

Step 5. Calculate the Answer

Using the element composition stored in marked_zero , the minimum and maximum values of the linear assignment problem can be calculated.

The minimum composition of the assigned matrix and the minimum sum is 18.

The maximum composition of the assigned matrix and the maximum sum is 43.

The code of the Answer_Calculator function is as follows:
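A minimal sketch of such a calculation, assuming the original (unreduced) matrix and the list of marked coordinates are available; the lower-case name `answer_calculator` and its return value are illustrative:

```python
def answer_calculator(matrix, marked_zero):
    """Sum the entries of the original matrix at the assigned positions."""
    return sum(matrix[r][c] for r, c in marked_zero)
```

For a minimization problem, pass the original cost matrix; for a maximization problem, pass the original profit matrix together with the positions found on the derived cost matrix. For instance, `answer_calculator([[4, 1, 3], [2, 0, 5], [3, 2, 2]], [(0, 1), (1, 0), (2, 2)])` gives 5.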

The complete code is as follows:

Hungarian algorithm - Wikipedia

The Hungarian Method for the Assignment Problem

- First Online: 01 January 2009

- Harold W. Kuhn

This paper has always been one of my favorite “children,” combining as it does elements of the duality of linear programming and combinatorial tools from graph theory. It may be of some interest to tell the story of its origin.

H.W. Kuhn, On the origin of the Hungarian Method , History of mathematical programming; a collection of personal reminiscences (J.K. Lenstra, A.H.G. Rinnooy Kan, and A. Schrijver, eds.), North Holland, Amsterdam, 1991, pp. 77–81.

A. Schrijver, Combinatorial optimization: polyhedra and efficiency , Vol. A. Paths, Flows, Matchings, Springer, Berlin, 2003.

Author information

Authors and affiliations.

Princeton University, Princeton, USA

Harold W. Kuhn

Corresponding author

Correspondence to Harold W. Kuhn .

Editor information

Editors and affiliations.

Inst. Informatik, Universität Köln, Pohligstr. 1, Köln, 50969, Germany

Michael Jünger

Fac. Sciences de Base (FSB), Ecole Polytechnique Fédérale de Lausanne, Lausanne, 1015, Switzerland

Thomas M. Liebling

Ensimag, Institut Polytechnique de Grenoble, avenue Félix Viallet 46, Grenoble CX 1, 38031, France

Denis Naddef

School of Industrial &, Georgia Institute of Technology, Ferst Drive NW., 765, Atlanta, 30332-0205, USA

George L. Nemhauser

IBM Corporation, Route 100 294, Somers, 10589, USA

William R. Pulleyblank

Inst. Informatik, Universität Heidelberg, Im Neuenheimer Feld 326, Heidelberg, 69120, Germany

Gerhard Reinelt

ed Informatica, CNR - Ist. Analisi dei Sistemi, Viale Manzoni 30, Roma, 00185, Italy

Giovanni Rinaldi

Center for Operations Reserach &, Université Catholique de Louvain, voie du Roman Pays 34, Leuven, 1348, Belgium

Laurence A. Wolsey

Copyright information

© 2010 Springer-Verlag Berlin Heidelberg

About this chapter

Kuhn, H.W. (2010). The Hungarian Method for the Assignment Problem. In: Jünger, M., et al. 50 Years of Integer Programming 1958-2008. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-68279-0_2

DOI : https://doi.org/10.1007/978-3-540-68279-0_2

Published : 06 November 2009

Publisher Name : Springer, Berlin, Heidelberg

Print ISBN : 978-3-540-68274-5

Online ISBN : 978-3-540-68279-0

eBook Packages : Mathematics and Statistics Mathematics and Statistics (R0)

The assignment problem

The assignment problem deals with assigning machines to tasks, workers to jobs, soccer players to positions, and so on. The goal is to determine the optimum assignment that, for example, minimizes the total cost or maximizes the team effectiveness.

The Hungarian algorithm

The Hungarian algorithm is an easy to understand and easy to use algorithm that solves the assignment problem.

HungarianAlgorithm.com © 2013-2024

The Hungarian method is a combinatorial optimization algorithm that solves the assignment problem in polynomial time and which anticipated later primal-dual methods. It was developed and published in 1955 by Harold Kuhn, who gave it the name "Hungarian method" because the algorithm was largely based on the earlier works of two Hungarian mathematicians, Dénes Kőnig and Jenő Egerváry.

The Hungarian algorithm, aka Munkres assignment algorithm, utilizes the following theorem for polynomial runtime complexity (worst case $O(n^3)$) and guaranteed optimality: If a number is added to or subtracted from all of the entries of any one row or column of a cost matrix, then an optimal assignment for the resulting cost matrix is also an ...

Solve an assignment problem online. Fill in the cost matrix of an assignment problem and click on 'Solve'. The optimal assignment will be determined and a step by step explanation of the hungarian algorithm will be given. Fill in the cost matrix ():

The Hungarian algorithm: An example. We consider an example where four jobs (J1, J2, J3, and J4) need to be executed by four workers (W1, W2, W3, and W4), one job per worker. The matrix below shows the cost of assigning a certain worker to a certain job. The objective is to minimize the total cost of the assignment.

Also, our problem is a special case of binary integer linear programming problem (which is NP-hard). But, due to the specifics of the problem, there are more efficient algorithms to solve it. We'll handle the assignment problem with the Hungarian algorithm (or Kuhn-Munkres algorithm).

Hungarian method for assignment problem. Step 1. Subtract the entries of each row by the row minimum. Step 2. Subtract the entries of each column by the column minimum. Step 3. Make an assignment to the zero entries in the resulting matrix. $A = \begin{pmatrix} M & 17 & 10 & 15 & 17 \\ 18 & M & 6 & 10 & 20 \\ 12 & 5 & M & 14 & 19 \\ 12 & 11 & 15 & M & 7 \\ 16 & 21 & 18 & 6 & M \end{pmatrix}$ (row-reduction annotation: $-10$)

Approach: The idea is to use the Hungarian Algorithm to solve this problem. The algorithm is as follows: For each row of the matrix, find the smallest element and subtract it from every element in its row. Repeat the step 1 for all columns. Cover all zeros in the matrix using the minimum number of horizontal and vertical lines.

The Hungarian method is a combinatorial optimization algorithm which solves the assignment problem in polynomial time. Later it was discovered that it was a primal-dual Simplex method. It was developed and published by Harold Kuhn in 1955, who gave the name "Hungarian method" because the algorithm was largely based on the earlier works of two Hungarian mathematicians: Denes Konig and Jeno ...

The Hungarian algorithm can be seen as the Successive Shortest Path Algorithm, adapted for the assignment problem. Without going into the details, let's provide an intuition regarding the connection between them. The Successive Path algorithm uses a modified version of Johnson's algorithm as reweighting technique.

The Hungarian Method: The following algorithm applies the above theorem to a given n × n cost matrix to find an optimal assignment. Step 1. Subtract the smallest entry in each row from all the entries of its row. Step 2. Subtract the smallest entry in each column from all the entries of its column. Step 3.

The total time required is then 69 + 37 + 11 + 23 = 140 minutes. All other assignments lead to a larger amount of time required. The Hungarian algorithm can be used to find this optimal assignment. The steps of the Hungarian algorithm can be found here, and an explanation of the Hungarian algorithm based on the example above can be found here.

The Hungarian Method for the Assignment Problem. H. W. Kuhn, Bryn Mawr College. Assuming that numerical scores are available for the performance of each of n persons on each of n jobs, the "assignment problem" is the quest for an assignment of persons to jobs so that the sum of the n scores so obtained is as large as possible.

The classical solution to the assignment problem is given by the Hungarian or Kuhn-Munkres algorithm, originally proposed by H. W. Kuhn in 1955 [3] and refined by J. Munkres in 1957 [5]. The Hungarian algorithm solves the assignment problem in $O(n^3)$ time, where $n$ is the size of one partition of the bipartite graph. This and other

In this lesson we learn what is an assignment problem and how we can solve it using the Hungarian method.